The Real Dangers of Social Media

When I was a kid, there were serious discussions in churches, schools, and in front of Congress about whether the music my friends and I listened to might be satanic.

I remember my friend’s mother making him throw away all of his Metallica tapes. I remember hiding the parental warning labels when asking my mom to buy the new Pantera album. I can still see my father breaking my Bone Thugs-N-Harmony CD in half when he realized they dropped more F-Bombs than Nixon in Cambodia.

By the time I reached adolescence, the grown-ups had moved on from offensive music and commenced their hysterics over the corruptive forces of violent video games. The hand-wringing about violent entertainment peaked after the Columbine Massacre in 1999. Back then, school shootings were still a rare occurrence. And it was only fitting that such an incomprehensible act be explained by such a new and incomprehensible form of entertainment.

Today, we chuckle at the hair metal bands of the late eighties as innocent fun while the shocking hip hop of the early nineties has evolved into a cornerstone of our modern culture. And after hundreds of studies across multiple decades, the American Psychological Association reports that they still haven’t found any evidence that playing video games motivates people to commit violence.1

Time has resolved our collective anxiety. The new has become the old, the shocking has become the expected. Yet, today we find ourselves in the grips of another moral panic—this time around social media.

The New Culprit

The instruments have changed, but the song remains the same. “Have Smartphones Destroyed a Generation?” reads one headline in The Atlantic. “Social Media Can Steal Childhood” reads another in Bloomberg Businessweek. Author Jaron Lanier states in his book, Ten Arguments for Deleting Your Social Media Accounts Right Now, that, “We’re being hypnotized by little technicians we can’t see, for purposes we don’t know. We’re all lab animals now.” In his New York Times bestselling book, Digital Minimalism, productivity author and Georgetown professor Cal Newport went even further, declaring that Big Tech companies are, in fact, “tobacco farmers in T-shirts selling an addictive product to kids.”

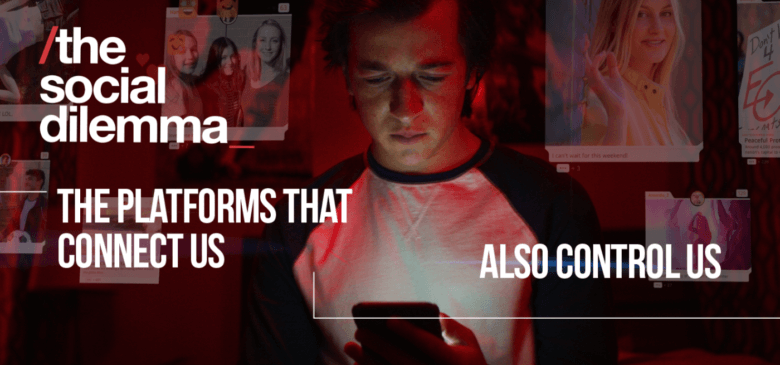

But nothing and no one has hit that “sky is falling” pitch of hysteria quite like the recent Netflix film, The Social Dilemma. I would call it a documentary except that there is a conspicuous absence of any data or actual scientific evidence in it. Instead, we’re treated to fictionalized reenactments of the repeated warnings given by tech industry “experts,” all of whom simply repeat and reinforce one another’s opinions for 94 minutes.

Like a Donald Trump speech, The Social Dilemma accuses everyone else of doing exactly what it, itself, is guilty of doing. The film is an echo chamber of people all sharing the exact same perspective with no dissent whatsoever. The tech author and social media defender, Nir Eyal, has told me that the entirety of his three-hour interview was left out of the film, as was all but about ten seconds of the interview with another skeptic of social media criticism, Jonathan Haidt.

But aside from muting dissent, the film freely indulges in a grotesque fantasy of how social media can corrupt your average American family. It shows a hapless son who is driven to depression and radical political beliefs by a few YouTube videos. A daughter who is plagued by self-image issues and insecurity due to an Instagram-like app. A family that can’t talk or be together because of their constant phone interruptions. And an evil genius super-algorithm that laughs maniacally as it determines what ad to show to each person (no, seriously).

And why does the film employ all these silly tropes and exaggerated claims? That’s right, to capture your attention and keep it by creating hysterical, paranoid claims about some evil “them” that’s out to get you.

Sound familiar?

Carl Jung said we criticize in others that which we hate most about ourselves. And there’s no richer irony than Netflix, the king of addictive algorithms, financing a feature-length documentary to criticize all those other addictive algorithms along with claims that they’re evil.

Social media is the favorite punching bag for all of our social ills these days. And look, I get it. All it takes is about six minutes on Facebook to discover that you intensely hate humanity in a way that you never thought possible. I, too, have been tempted in the past to join in on the anti-social-media crusade, and shit all over the big tech companies to my intestinal delight.

The problem is the data.

See, there’s been research on social media and its effects on people. Lots of it. They’ve studied how it affects adults, how it affects children, how it influences politics and mood and self-esteem and general happiness.

And the results will probably surprise you. Social media is not the problem.

We are.

Three Common Criticisms of Social Media That Are Wrong

Criticism One: Social Media Harms Mental Health

It is true that over the past two decades, we have seen a worrying increase in rates of suicide, depression, and anxiety, especially in young people. But it’s not clear that social media is the cause.

A lot of scary research on social media usage is correlational research. That means researchers simply look at how much time people spend on social media, then check whether those same people are anxious and/or depressed. Most studies have found that the people doomscrolling Facebook all day are indeed the ones who are anxious and depressed.

For example, a 2018 study found a correlation between social media screen time and increases in depressive symptoms and suicide attempts.2 This is just one of many correlational studies finding the same result: lots of social media usage = lots of depressed teenagers.

Sounds pretty bad, doesn’t it?

The problem with studies like this is that it’s a chicken-and-egg situation. Is it that social media causes kids to feel more depressed? Or is it that really depressed kids are more likely to use social media?

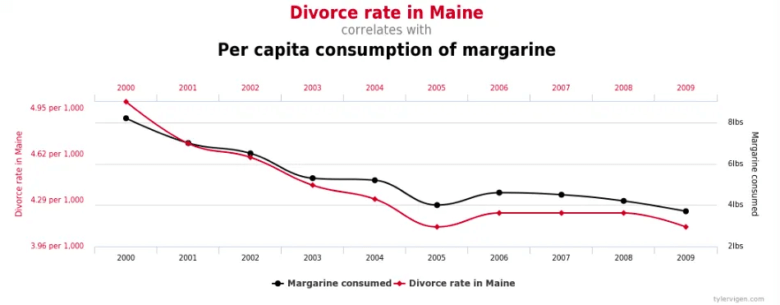

This is the limit of correlational studies. They simply show you that two things tend to occur together. They don’t tell you whether one causes the other, or whether the link is a coincidence. For example, the divorce rate in the state of Maine is highly correlated with the consumption of margarine. But obviously, nobody thinks that margarine is the leading cause of divorce.
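To make the chicken-and-egg problem concrete, here is a minimal sketch in Python. Everything in it is made up for illustration: the two "worlds," the function names, and the 0.5 effect size are assumptions, not numbers from any of the studies cited here. In one world, usage drives depression. In the other, depression drives usage. A correlational study would see the same number in both.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate(direction, n=5000, seed=0):
    """Correlation between usage and depression in a hypothetical world.

    direction="usage_first":      usage is the cause, depression the effect.
    direction="depression_first": depression is the cause, usage the effect.
    The 0.5 effect size is an arbitrary assumption.
    """
    rng = random.Random(seed)
    usage, depression = [], []
    for _ in range(n):
        cause = rng.gauss(0, 1)
        effect = 0.5 * cause + rng.gauss(0, 1)  # effect = half the cause + noise
        if direction == "usage_first":
            usage.append(cause)
            depression.append(effect)
        else:
            usage.append(effect)
            depression.append(cause)
    return pearson(usage, depression)


r_usage_first = simulate("usage_first")
r_depression_first = simulate("depression_first")
print(round(r_usage_first, 2), round(r_depression_first, 2))
```

Both worlds print the same positive correlation, even though the causal arrow points in opposite directions. The spreadsheet alone can't tell you which world you live in.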

The truth is, correlational studies kind of suck. They are really basic and the results aren’t that useful. So why do people do them?

Well, people do them because they’re easy. It’s very easy to round up a few hundred kids, ask them how much they use social media, then ask them if they feel anxious or depressed, and create a spreadsheet. It’s much, much harder to round up thousands of kids, track them over the course of a decade and calculate how any shifts or changes in their social media usage actually affect their mental health over the years. That would require a lot of time and money and researchers. But that’s also how you would actually know if social media causes mental health problems.

Well, researchers with a lot of time and money have run those longitudinal studies, and the results are in:

- Researchers at Brigham Young University tracked the social media usage and mental health of 500 subjects, between ages 13 and 20, from 2009 to 2017. Over half of the subjects used social media every day during that period. Many of them used it for at least an hour each day. After eight years, the researchers found no connection between depression/anxiety and social media use.3

- A similar study was done in Finland, this time tracking 2,891 adolescents between 2014 and 2020. Again, they found no causal link between social media usage and symptoms of depression/anxiety.4

- Another version of this study was done with 600 high school and college students in Canada. This one also found that social media usage did not predict depressive symptoms.5

But what about FOMO? What about Facebook-stalking? What about the envy of seeing how awesome your friends’ lives are?

A German study following 514 people for over a year found that the more depressed or anxious the social media user, the more likely they were to “stalk” other users or indulge in envy of other people’s lives. The same Canadian study mentioned above also found that depressive symptoms in girls predicted their social media usage. Therefore, the researchers are leaning towards the conclusion that it’s anxiety and depression that drive us to use social media in all the horrible ways we use it, not the other way around.6

Basically: the fucking egg came first. Anxiety/depression leads to greater amounts of envious and voyeuristic social media usage. The more eggs you’ve got rattling around in your head, the more likely you are to sit there and gawk at the latest, greatest narcissistic fuckwit posting their beach selfies on your feed.

Then, there are the studies you never hear about. Like the one from 2012 that found posting status updates on Facebook reduces feelings of loneliness.7 Or the one from earlier this year that found activity on Twitter can potentially increase happiness.8 Or this one that found that active social media use actually decreases symptoms of depression and anxiety.9

Those of us who were around in 2004 can remember why social media was such a big deal in the first place—it connected you to everybody in your life in a way that was simply impossible in the before-times. And those initial benefits of social media are so immediate and obvious that we’ve likely become inured to them and take them for granted.

Especially because in the last ten years, politics has gotten in the way…

Criticism Two: Social Media Causes Political Extremism or Radicalization

The past decade has seen a rise in populist movements around the world. It’s also seen larger and more frequent public protests, mainstream adoption of conspiracy theories and really fucking obnoxious Twitter arguments. Considering so much political discourse occurs on social media, it’s logical to assume that social media might be the cause of all our troubles.

But three facts make it unlikely that social media is the culprit:

- Studies show that political polarization has increased most among the older generations who use social media the least. Younger generations who are more active on social media tend to have more moderate views.10

- Polarization has been widening in the United States and many other countries since the 1970s, long before the advent of the internet.11

- Polarization has not occurred universally around the world. In fact, some countries are experiencing less polarization than in previous decades.12

There are plenty of explanations for growing political polarization and populism that don’t involve social media. Growing income inequality is the most obvious explanation. Divergences in educational attainment in different age demographics. Growing immigrant populations and multiculturalism. Globalization and stagnant wages. And so on and so forth.

But what about the disinformation and conspiracy theories?

Well, research shows that despite the proliferation of “fake news,” most people don’t fall for it. In fact, most fake news gets shared not because people think it’s true, but simply because it wins them cool points with their social media friends.13

(Yeah… people suck.)

Not only that, research shows that most fake news doesn’t originate on social media, it actually originates on televised news.14

Which actually makes sense. Fake news is hardly anything new. Back in the 18th and 19th centuries, people would anonymously publish newspapers and pamphlets spreading horrible rumors about their political opponents. In the 1790s, one newspaper, secretly financed by Thomas Jefferson, wrote slanderous op-eds claiming that George Washington was going to declare himself king of the new republic. During the Civil War, southern newspapers claimed that Abraham Lincoln was not only going to abolish slavery, but force whites and blacks to intermarry.

As for political extremists, you don’t have to read much history to discover that political extremists are the rule, not the exception. How quickly we forget the “Red Scare” McCarthyism in the 1950s, or the bombings by left-wing revolutionaries that ravaged government buildings and universities in the 1970s, or the socialists who were imprisoned for their beliefs in the 1910s.

None of this shit is new.

Criticism Three: Big Tech Companies Are Profiting Off the Mayhem

From soccer moms to politicians to Cardi B, the Big Tech companies of Silicon Valley have become everybody’s favorite punching bag. Mark Zuckerberg has been called in front of Congress four times in the past couple of years to answer for… well, I’m still not sure exactly what. Top executives from Twitter, Google, Apple, and Microsoft have similarly been called to Washington to be burned at the proverbial stake for the public’s satisfaction.

The assumption here is that social media is destroying the fabric of society, and Big Tech is gleefully cashing in on it.

But social media is not destroying society, and even if it were, Big Tech is not fanning the flames. They’re actually spending a lot of money trying to put them out.

These companies have spent billions in efforts to fight back against disinformation and conspiracy theories. A recent study of whether YouTube’s recommendation algorithm promoted extremist alt-right content actually found the opposite: the algorithm seemed to go out of its way to promote mainstream, established news sites far more often than fringe, looney figures.15

Similarly, last year Facebook banned tens of thousands of conspiracy theorist and terrorist groups. This has been part of their ongoing campaign to clean up their platform. They’ve hired over 10,000 new employees in the past two years just to review content on the site for disinformation and violence. They’ve also become absolutely draconian in banning ad accounts in the last year. Leading up to the election, hundreds, if not thousands, of legitimate businesses saw their ad accounts shut down with no explanation.

Not only is Facebook not making a profit off disinformation, they are undoubtedly losing lots of money trying to clean it up. Whether you agree with their policies or editorial decisions, you can’t argue that they’re standing by doing nothing.

Yeah, But Clearly Something’s Not Right… So What Is It?

When I was in high school, one year at Thanksgiving, my cousin James wouldn’t shut the fuck up about something called “Y2K.” He explained that for some reason, all of the computers in the world weren’t properly programmed to handle dates that didn’t begin with “19xx,” and with the year 2000 coming up, this was bound to be a problem. Yada, yada, yada, therefore, reasons and things, all the computers (the electric grid, the banking system, government computers, everything) would shut down at the same exact moment on New Year’s and the world would come to an end. Pure armageddon.

The family sat there staring at him, aghast. Some politely asked questions. Most of us were just kind of confused. James launched into a deadly serious to-do list of everything we in the family must do in the five weeks between then and Y2K: buy thousands of dollars’ worth of canned food and bottled water. Preferably buy or rent a piece of property that existed underground. Get guns and ammunition. Gold bars. Build a bunker or make friends with someone who already had one.

Eventually, one by one, members of the family proceeded to tell my cousin that he was out of his fucking mind. He got upset. Said he was worried about our safety. But then the adults at the table changed the subject and we started talking about gravy recipes or something.

We never heard about Y2K again, and to my knowledge, nobody (including my cousin) bought canned food or bottled water or dug themselves a bomb shelter in the middle of the Texas hill country. There were bugs related to the dating of computer software, but most of them were patched well before the clock struck midnight. Nothing happened. People partied like it was any other New Year’s. And now Y2K is nothing more than a punchline for those of us who remember it.

Back in the 90s, conspiracy theories like my cousin’s were just as common as they are now. The difference was that they were far less harmful because the social networks that existed at the time cut them off aggressively at the source. That night at Thanksgiving dinner, my family members cut my cousin off, ending his ability to spread his ideas.

But today, someone like my cousin goes online, finds a web forum, or a Facebook group or a Clubhouse room, and all the little Y2Kers get together and spend all of their time socializing and validating each other based on the shared assumption that the world is about to end.

Facebook didn’t create the crazy Y2Kers. It merely gives them an opportunity to find each other and connect—because, for better or worse, Facebook gives everybody the opportunity to find each other and connect.

Once these people have found each other and connected, because of their shared belief in the apocalypse or whatever, they become far more motivated to post and engage with others about their crazy ideas. Think about it: nobody who thought New Year’s Eve 1999 was going to be fine felt any reason to say anything that night. It was only my cousin who couldn’t shut up and dominated the conversation for the next hour.

This asymmetry in beliefs is important, as the more extreme and negative the belief, the more motivated the person is to share it with others. And when you build massive platforms based on sharing… well, things get ugly.

The 90/9/1 Rule

I’ve previously written about something called the Pareto Principle or the 80/20 Rule. It’s a “rule” that states that 80% of results come from 20% of the processes. E.g., 80% of a company’s revenue will often come from 20% of its customers; 80% of your social life is probably spent with 20% of your friends; 80% of traffic accidents are caused by 20% of the drivers; 80% of the crime is committed by 20% of the people. Etc.

(Note: The 80/20 rule doesn’t always shake out to exactly 80/20 every time, but the principle holds—the majority of output is generated by a minority of input.)
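If you want to check how close your own numbers come to 80/20, a rough sketch in Python follows. The function name `top_share` and the revenue figures are hypothetical, invented purely to show the arithmetic.

```python
def top_share(values, fraction=0.2):
    """Share of the total contributed by the top `fraction` of items."""
    ranked = sorted(values, reverse=True)
    # Number of items in the top slice (at least one).
    k = max(1, round(len(ranked) * fraction))
    return sum(ranked[:k]) / sum(ranked)


# Hypothetical revenue from ten customers (made-up numbers).
revenue = [400, 400, 50, 40, 30, 25, 20, 15, 10, 10]
share = top_share(revenue)
print(f"Top 20% of customers bring in {share:.0%} of revenue")
```

In this invented example, two customers out of ten account for 800 of the 1,000 total. Real data will rarely land on exactly 80/20, which is the point of the note above.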

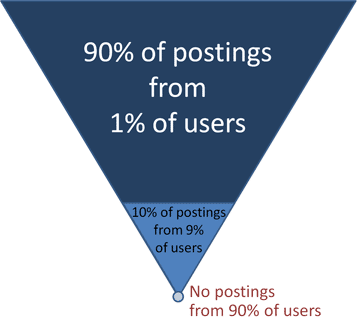

People who have studied social networks and online communities have found a similar rule to describe information shared on the internet. They’ve dubbed it the “90/9/1 Rule.”

The 90/9/1 rule states that in any social network or online community, 1% of the users generate 90% of the content, 9% of the users create 10% of the content, and the other 90% of people are mostly silent observers.

Let’s call the 1% who create 90% of the content “creators.” We’ll call the 9% “engagers,” since most of their content is a reaction to what the 1% is creating, and the 90% who are merely observers we’ll refer to as “lurkers.”
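To get a feel for how lopsided that split is, here is a small Python sketch of the arithmetic. The community size and post count are assumptions picked for round numbers, and `split_90_9_1` is a hypothetical helper, not something from the research on the rule.

```python
def split_90_9_1(users, posts):
    """Apply the 90/9/1 rule to a community of `users` producing `posts`."""
    if users < 100:
        raise ValueError("need at least 100 users for a whole-number 1%")
    creators = users // 100          # 1% of users
    engagers = users * 9 // 100      # 9% of users
    lurkers = users - creators - engagers
    creator_posts = posts * 90 // 100        # 90% of the content
    engager_posts = posts - creator_posts    # the remaining 10%
    return {
        "creators": creators,
        "engagers": engagers,
        "lurkers": lurkers,
        "posts_per_creator": creator_posts / creators,
        "posts_per_engager": engager_posts / engagers,
    }


# Hypothetical community: 1,000 users, 10,000 posts.
community = split_90_9_1(users=1000, posts=10000)
ratio = community["posts_per_creator"] / community["posts_per_engager"]
print(community)
print(f"A creator posts about {ratio:.0f}x as much as an engager")
```

Under these assumptions, 10 creators write 900 posts each, 90 engagers write about 11 each, and 900 lurkers write nothing. Taken at face value, the rule implies each creator is roughly 81 times as loud as each engager.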

Research on social media usage shows that people tend to only post things they care deeply about. Think weddings, graduations, children’s birthdays, and global conspiracies that will destroy the world. This means that most creators are people who are either incredibly passionate about the content or the content is a major part of their life.

Engagers tend to congregate around creators that they identify with and love. This is because their favorite creators (or, I guess, “influencers”) represent their ideas and values better than they could represent them for themselves. The engagers are the people who wish they could say what the creators are saying but don’t have the time/energy/courage/talent to do it. So these little tribes of engagers cluster around creators where they validate, support, and defend them against perceived threats.

Lurkers are people who are busy. They are people who have doubts, are unsure, or are skeptical. Lurkers can’t be bothered to respond to your comment because they have a diaper to change or dinner to cook and besides, who cares if the Illuminati planned 9/11 anyway? Doesn’t really change anything.

The resulting dynamic of social networks comes to reflect Bertrand Russell’s old lament: “The whole problem with the world is that fools and fanatics are always so certain of themselves, and wiser people so full of doubts.”

The creators are largely the fools and fanatics who are so certain of themselves. They are people like my cousin James posting about the end of the world. They are disproportionately the finger-waggers, moralizers, and doomsayers. It’s not necessarily the platform’s algorithms that favor these fanatics—it’s that human psychology favors these fools and fanatics and the algorithms simply reflect our psychology back to us.

Meanwhile, the lurkers—the 90%—are people who are more or less reasonable. And because they are more or less reasonable, they don’t see the point in spending their afternoon arguing on Facebook. They aren’t sure of their beliefs and remain open to alternatives. And because they are open to alternatives, they are hesitant to publicly post something they may not fully believe.

As a result, the majority of the population’s beliefs go unnoticed and have little influence on the overarching cultural narrative.
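A back-of-the-envelope sketch in Python shows how this plays out. The inputs are pure assumptions for illustration: suppose 10% of people hold some fanatical view and each of them posts 20 times as often as everyone else. The function name `share_of_posts` is hypothetical.

```python
def share_of_posts(fanatic_share, posting_ratio):
    """Fraction of all posts written by fanatics.

    fanatic_share: fraction of the population holding the fanatical view.
    posting_ratio: how many times more often a fanatic posts than a moderate.
    """
    fanatic_posts = fanatic_share * posting_ratio
    moderate_posts = (1 - fanatic_share) * 1  # moderates post at a baseline rate of 1
    return fanatic_posts / (fanatic_posts + moderate_posts)


# Assumed numbers: 10% of people, posting 20x as often.
visible = share_of_posts(fanatic_share=0.10, posting_ratio=20)
print(f"{visible:.0%} of what you see comes from 10% of people")
```

With those made-up inputs, a view held by one person in ten makes up roughly two-thirds of the feed. Nobody's mind has changed. Only the volume has.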

This is why the internet turns into this bizarro world where reality gets distorted and flipped on its head.

- Issues that are important to small but loud minorities dictate the discussion of the majority. Last year, arguments over transgender bathrooms dominated Twitter and consumed large amounts of air time on television, resulting in a “cancelling” of J.K. Rowling. This is despite the fact that roughly half of one percent of the US population identifies as transgender. Meanwhile, discussions about issues that do affect the vast majority of the population, such as infrastructure, healthcare, homelessness, mental health, etc. remain all but ignored.

- Because radical and unconventional views exert a disproportionate influence online, they are mistakenly seen as common and conventional. The vast majority of Americans complied with mask mandates during the pandemic, yet the popular perception in much of the US is that most people did not. Similarly, there’s a perception that much of the younger generations are “woke” and subscribe to Critical Race Theory, whereas polling consistently shows these views to be incredibly unpopular, across all parts of society.

- People develop extreme and irrational levels of pessimism. Because creators online tend to be the doomsayers and extremists, the overall perception of the state of the world skews increasingly negative. Polling data shows optimism in much of the developed world to be at all-time lows despite the fact that by almost every statistical measurement—wealth, longevity, peace, education, equality, technology, etc.—we live in the best time in human history, and it’s not even close.

Much of this can be summed up in the simple phrase: social media does not accurately reflect the underlying society.

This sounds obvious on the surface, yet I find that most of us forget it over and over again. We assume the horrible news story being passed around on Facebook is true. We see the horrendous stream of comments below and think to ourselves, “People are shit.” We see a video clip of some asshat telling another asshat that unless they hate every asshat of a certain hat then they must implicitly hate every other type of asshat.

But this is not reality. Social media does not reflect reality. Social media reflects a fun-house mirror of society, one that elongates and exaggerates the crazy and extraordinary, while minimizing and compressing the sane and ordinary.

The result is we get a false narrative about what’s going on in the world. The world seems to be in a constant state of quasi-armageddon—yet you look out your window, walk the dog, call your mom, and everything is kinda fine.

Optimizing for Controversy vs Consensus

But there is one group of people whose lives have been completely ruined by social media: those who work in media and entertainment.

See, if you’re a journalist or a film producer or a radio broadcaster, social media has completely fucked your ass sideways. In fifteen short years, it has turned your profession, your company, your career, and therefore your life, upside down.

Why does this matter?

Because 99% of the information the rest of us get comes from people who work in media and entertainment.

Everyone who works at a newspaper or a magazine or on a TV show has completely had their lunch eaten by these new platforms. Therefore, they come to falsely assume that everyone’s had their lunch eaten by these platforms and they proceed to write about it as though it were true.

But really, ask yourself, how much has your in-the-flesh life actually changed since you’ve been active on Facebook or YouTube? With the exception of watching less TV or going to fewer movies, probably not much.

The truth is that unless you are part of the 1% creator class, things aren’t much different. What’s changed is simply where you’re getting your information and entertainment, and, of course, who is giving it to you.

A few generations ago, there were only a few television channels, a few radio stations, and a few international news services. Because the channels of information were so limited, everybody more or less got their information and entertainment from the same two or three sources.

Therefore, if you were in charge of one of these few channels of information, it was in your interest to produce content that appealed to as many people as possible.

So what we saw was a traditional media in the 20th century that largely sought to produce content focused on consensus. News was delivered in a way that everybody could agree on. TV shows were based on the most stereotypical families possible. Talk shows focused on topics everyone could relate to.

But with the internet, the supply of information exploded. Suddenly everyone had 500 TV channels and dozens of radio stations and an infinite number of websites to choose from.

Therefore, the most profitable strategy in media and entertainment stopped being consensus and instead became controversy.

If people have 500 options, the way you get them to stick with you isn’t by treating them like everyone else—it’s by treating them differently than everyone else.

This optimization for controversy trickled down, all the way from politicians and major news outlets, to individual influencers on social media. The easiest way to get attention these days isn’t to post something profound or insightful—it’s to shit on someone else’s profundity and insight.

Politicians with more extreme views have more followers than moderates.16 The political discourse itself has gotten more polarized over time,17 and again, this appears to be due to a very small fraction of users with very large followings who produce more polarized content than the average user—i.e., the 1% of creators.18

The result is this fun-house-mirror version of reality, where you go online (or turn on cable news) and feel like the world is constantly falling apart around you but it’s not.

And the fun-house-mirror version of reality isn’t caused by social media; it’s caused by the profit incentives in media/entertainment in an environment where there’s way more supply of content than there is demand. Where there’s far more supply of news and information than there is time to consume it. Where there’s a natural human proclivity to pay more attention to a single car crash than the hundreds of people successfully driving down the highway, going on their merry way.

The Silent Majority

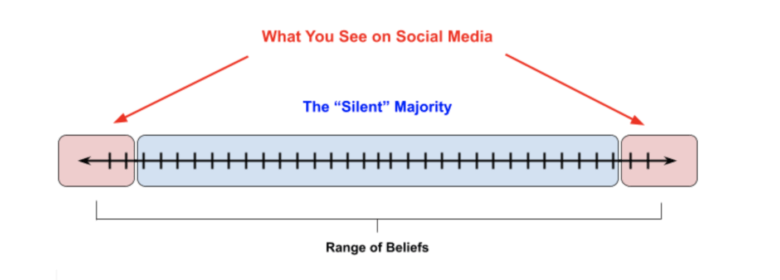

What we get is a cultural environment that looks like this:

Social media has not changed our culture. It’s shifted our awareness of culture to the extremes of all spectrums. And until we all recognize this, it will be impossible to have serious conversations about what to do or how to move forward.

You can affect the culture simply by shifting people’s awareness about certain subjects. The hysteria in the media over their irrelevance has pushed our culture to a place where we overestimate social media and underestimate our own psychology.

Instead, we must push our perception back to a more realistic and mature understanding of social media and social networks. To do this, it’s important that each of us individually understands concepts such as The Attention Diet and the Attention Economy, that we learn to cut out most news consumption, and, you know, maybe spend some more time outside.

Moral panics find a scapegoat to blame for what we hate to admit about ourselves. My parents and their friends didn’t ask why kids were drawn to such aggressive and vulgar music. They were afraid of what it might have revealed about themselves. Instead, they simply blamed the musicians and the video games.

Similarly, rather than owning up to the fact that these online movements are part of who we are—that these are the ugly underbellies of our society that have existed and persisted for generations—we instead blame the social media platforms for accurately reflecting ourselves back to us.

The great biographer Robert Caro once said, “Power doesn’t always corrupt, but power always reveals.” Perhaps the same is true of the most powerful networks in human history.

Social media has not corrupted us, it’s merely revealed who we always were.

1. See: American Psychological Association (February, 2020) Resolution on Violence in Video Games.

2. Twenge, J. M., Joiner, T. E., Rogers, M. L., & Martin, G. N. (2018). Increases in Depressive Symptoms, Suicide-Related Outcomes, and Suicide Rates Among U.S. Adolescents After 2010 and Links to Increased New Media Screen Time. Clinical Psychological Science, 6(1), 3–17.

3. Sarah M. Coyne, Adam A. Rogers, Jessica D. Zurcher, Laura Stockdale, McCall Booth. (2020) Does time spent using social media impact mental health?: An eight year longitudinal study. Computers in Human Behavior, Volume 104, 106-160.

4. Puukko, Kati; Hietajärvi, Lauri; Maksniemi, Erika; Alho, Kimmo; Salmela-Aro, Katariina. (2020). “Social Media Use and Depressive Symptoms—A Longitudinal Study from Early to Late Adolescence” Int. J. Environ. Res. Public Health 17, no. 16: 5921.

5. Heffer, T., Good, M., Daly, O., MacDonell, E., & Willoughby, T. (2019). The Longitudinal Association Between Social-Media Use and Depressive Symptoms Among Adolescents and Young Adults: An Empirical Reply to Twenge et al. (2018). Clinical Psychological Science, 7(3), 462–470.

6. Scherr, S., Toma, C. L., & Schuster, B. (2019). Depression as a Predictor of Facebook Surveillance and Envy: Longitudinal Evidence From a Cross-Lagged Panel Study in Germany. Journal of Media Psychology, 31(4), 196–202.

7. Deters, F. große, & Mehl, M. R. (2013). Does Posting Facebook Status Updates Increase or Decrease Loneliness? An Online Social Networking Experiment. Social Psychological and Personality Science, 4(5), 579–586.

8. Jiang, Q. (2021). Social Media Usage and the Level of Depressive Symptoms in the United States (SSRN Scholarly Paper ID 3672093). Social Science Research Network.

9. Thorisdottir, I. E., Sigurvinsdottir, R., Asgeirsdottir, B. B., Allegrante, J. P., & Sigfusdottir, I. D. (2019). Active and Passive Social Media Use and Symptoms of Anxiety and Depressed Mood Among Icelandic Adolescents. Cyberpsychology, Behavior, and Social Networking, 22(8), 535–542.

10. Boxell, L., Gentzkow, M., & Shapiro, J. M. (2017). Greater Internet use is not associated with faster growth in political polarization among US demographic groups. Proceedings of the National Academy of Sciences, 114(40), 10612–10617.

11. Desilver, D. (2014, June 12). The polarized Congress of today has its roots in the 1970s. Pew Research Center.

12. Boxell, L., Gentzkow, M., & Shapiro, J. M. (2020). Cross-Country Trends in Affective Polarization (No. w26669). National Bureau of Economic Research.

13. Pennycook, G., & Rand, D. G. (2021). The Psychology of Fake News. Trends in Cognitive Sciences, 25(5), 388–402.

14. Tsfati, Y., Boomgaarden, H. G., Strömbäck, J., Vliegenthart, R., Damstra, A., & Lindgren, E. (2020). Causes and consequences of mainstream media dissemination of fake news: Literature review and synthesis. Annals of the International Communication Association, 44(2), 157–173.

15. Ledwich, M., & Zaitsev, A. (2019). Algorithmic Extremism: Examining YouTube’s Rabbit Hole of Radicalization. ArXiv:1912.11211 [Cs].

16. Hong, S., & Kim, S. H. (2016). Political polarization on twitter: Implications for the use of social media in digital governments. Government Information Quarterly, 33(4), 777–782.

17. Garimella, K., & Weber, I. (2017). A Long-Term Analysis of Polarization on Twitter. ArXiv:1703.02769 [Cs].

18. Shore, J., Baek, J., & Dellarocas, C. (2018). Network Structure and Patterns of Information Diversity on Twitter. MIS Quarterly, 42(3), 849–872.